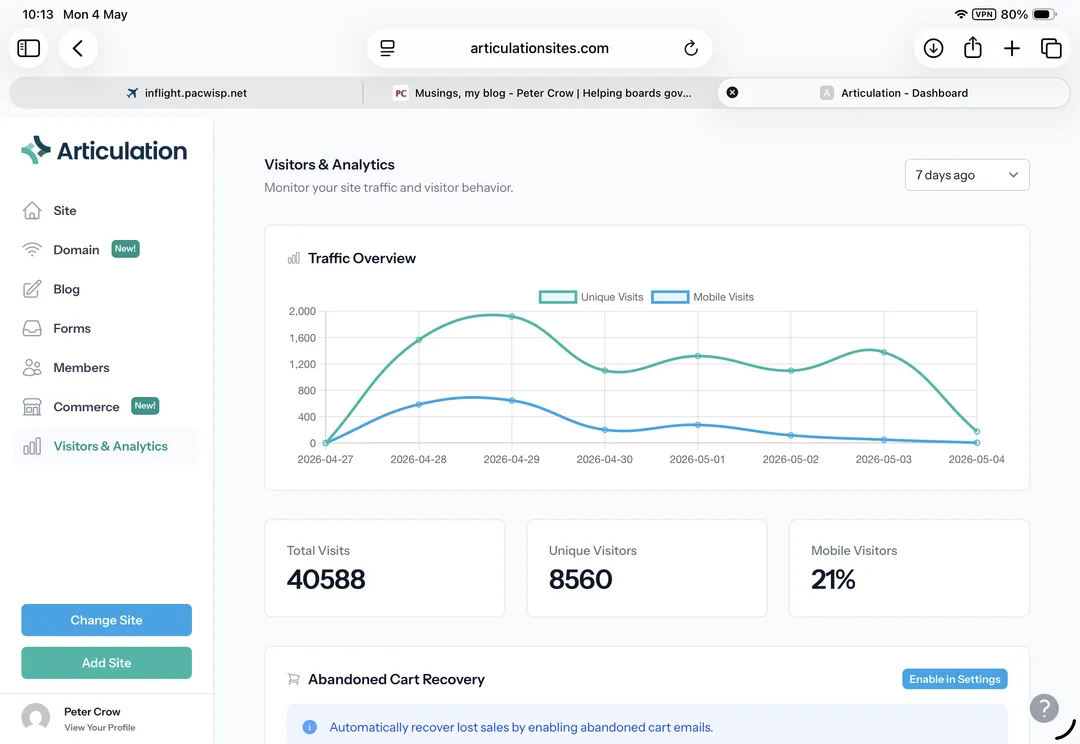

Have you ever wondered who is looking at your website, and why? My new website went live seven days ago (well, a very similar website), so I decided to look at the analytics to get an idea.

To my astonishment, some 40,600 total visits (page hits) have been recorded over the past seven days, from just over 8,500 unique visitors. Extrapolated over 52 weeks, that points to over two million page hits per year.

This sounds impressive, but I’m not convinced: closer inspection shows the numbers are not quite what they seemed at first glance. When ‘include Crawlers/Bots’ is de-selected, a clearer picture emerges: the total visit count drops to 10,600-odd. That about three quarters of the traffic to petercrow.com is not by or from real people is good to know. That this traffic comes from AI tools and other systems, hoovering around collecting data, justifies our investment in appropriate security. That one in five visits is from a mobile device suggests our selection of a tool that automatically provides desktop-, tablet-, and mobile-friendly display options was a good decision too.

Turning to the ‘real visitors’ now. If one in four unique visitors is not a bot, about 2,100 people visited some part of the site over the past seven days. Some (most?) will have been curious about the new site. But others looked at one or more Musings articles, and some checked some aspect of the capabilities and credentials material.
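For anyone who wants to check the arithmetic, here is a rough sketch in Python using the rounded figures quoted above. It makes two assumptions of mine: that every week of the year looks like this one, and that the bot share of page hits applies equally to unique visitors. The variable names are illustrative only.

```python
# Figures quoted in the post (seven-day totals, rounded).
total_hits = 40_600       # all page hits, crawlers and bots included
human_hits = 10_600       # page hits with crawlers and bots excluded
unique_visitors = 8_500   # all unique visitors, bots included

# Naive extrapolation: assumes every week looks like this one.
annual_hits = total_hits * 52

# Fraction of page hits that did not come from people.
bot_share = 1 - human_hits / total_hits

# Assumption: the same share applies to unique visitors.
human_visitors = unique_visitors * (1 - bot_share)

print(f"Annual page hits: {annual_hits:,}")
print(f"Bot share of hits: {bot_share:.0%}")
print(f"Estimated human visitors: {human_visitors:,.0f}")
```

The unrounded ratio gives a slightly higher estimate of human visitors than the one-in-four shortcut, but both land in the same region of a little over two thousand people for the week.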

Even if only one or two per cent of these ‘real people’ are genuinely interested (roughly 20 to 40 per week), and ten per cent of those get in touch, my decades-long quest (to provoke candid conversations that help boards govern with impact) has, probably, been worthwhile. Onward.